A Code Quality Story – Analysis Using Code Metrics

I wrote this article to help others by sharing what I have learned about the code analysis tools in Visual Studio Enterprise 2017.

Running an analysis called “Code Metrics” on your Visual Studio solution or project can help you discover issues before your code is released. Visual Studio's Code Metrics analysis calculates statistics on maintainability, cyclomatic complexity, depth of inheritance, class coupling, and lines of code, and it points you to the specific areas of code (for example, a method) that could be improved. Keep in mind that developers hold different opinions about what good code quality means, and there are situations where meeting target “numbers” for Code Metrics might not be appropriate for your project.

Let’s dive in and get started with a story.

Madame Future Seer decided to make a simple Fortune Teller program [link]. In a rush to deliver and showcase her product for release, she did not take time to write automated tests or inspect her code for problems. It compiled without errors, right? She manually tested all the different user input scenarios (great job!), so why should she care about running Code Metrics on her project?

Let’s take a look at what the problems could be…

She loaded her project into Visual Studio.

Madame was eager to see her very own Code Metrics results, so in Visual Studio she clicked:

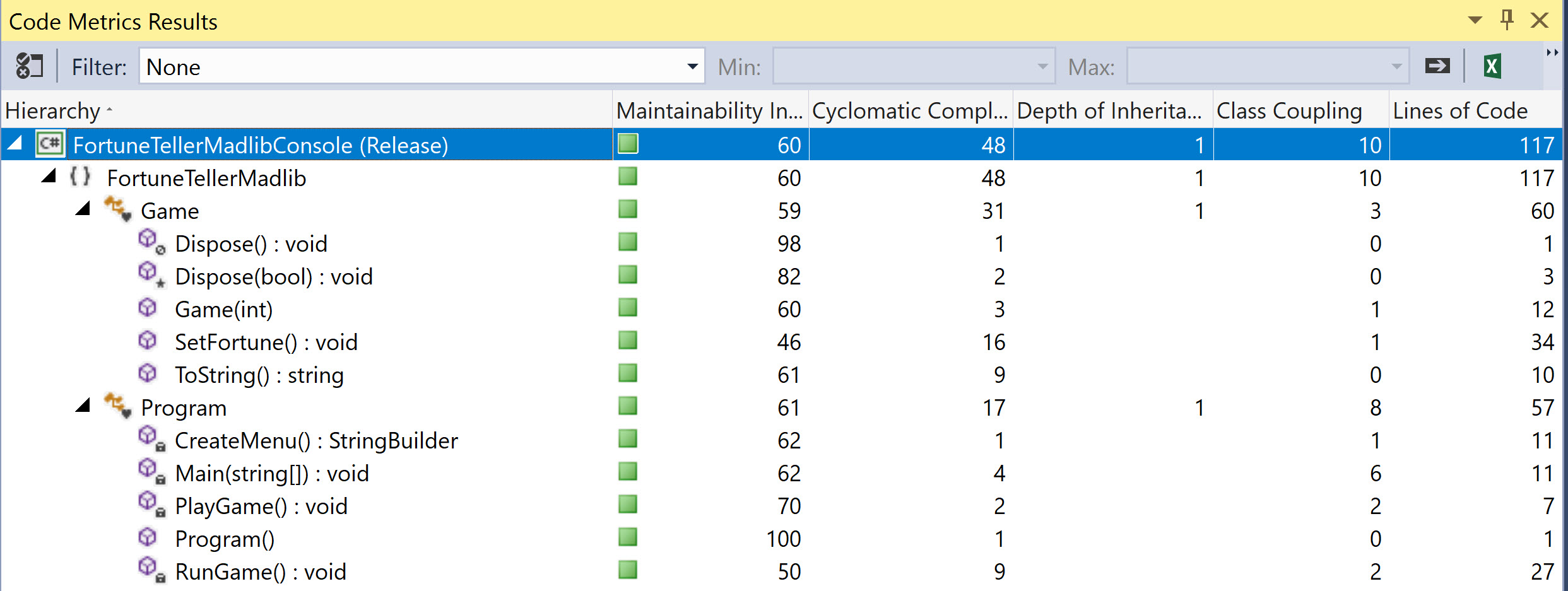

Menu -> Analyze -> Calculate Code Metrics -> On Solution

Madame Seer looked into her Crystal Ball for guidance on interpreting these results and understanding how they applied to her code. The entity in her crystal ball, an “artificial intelligence”, had searched the Internet and learned all about Code Metrics. For humorous effect, the crystal ball morphed into a Magic 8 Ball to tell Madame Seer what it thought of her Code Quality, based on its study of Code Metrics and her individual report… “Your Code Good”.

Madame Seer was excited and relieved at the same time! But she did not want to remain in the dark, so she asked her Crystal Ball to share how it had interpreted her project’s Code Metrics. Her Crystal Ball agreed and began to walk through each category of the Visual Studio Code Metrics report.

Cyclomatic Complexity

“Your program code overall performed well according to Code Metrics,” complimented her Crystal Ball. “However, your code’s cyclomatic complexity is a little too high to say it’s great,” snapped the ball.

Madame learned that cyclomatic complexity [link] measures the number of linearly independent paths through her program, created by its control and decision points. Every IF/ELSE branch, loop, or switch case adds a decision point, so the more of them she piled up, the higher her cyclomatic complexity would climb, and so would the number of unit tests needed to cover every path!
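The Fortune Teller source isn't shown here, so the sketch below uses Python purely for illustration; Visual Studio computes the real metric from compiled .NET code and may count some constructs differently. It assumes a simplified McCabe-style rule: start at 1 and add one for each `if`/`elif`, loop, conditional expression, `except` handler, and comprehension, plus one for each extra `and`/`or` operand. The `pick` function is hypothetical.

```python
import ast

# Node types this sketch treats as decision points. Assumption: a simplified
# McCabe-style count; Visual Studio analyzes compiled .NET IL instead, so its
# numbers for the same logic can differ.
DECISION_NODES = (ast.If, ast.IfExp, ast.For, ast.While,
                  ast.ExceptHandler, ast.comprehension)


def cyclomatic_complexity(source: str) -> int:
    """Return 1 + the number of decision points found in `source`."""
    decisions = 0
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, DECISION_NODES):
            decisions += 1
        elif isinstance(node, ast.BoolOp):
            # `a and b and c` short-circuits twice, adding two branches.
            decisions += len(node.values) - 1
    return decisions + 1


# Hypothetical menu in the spirit of Madame Seer's fortune choices.
fortune_menu = """
def pick(choice):
    if choice == 1:
        return "love"
    elif choice == 2:
        return "money"
    elif choice == 3:
        return "health"
    return "unknown"
"""

print(cyclomatic_complexity(fortune_menu))  # 4: three branches + 1
```

Each `elif` in `pick` is one more path, which is why a long chain of menu choices pushes the number up even when every branch is trivial.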

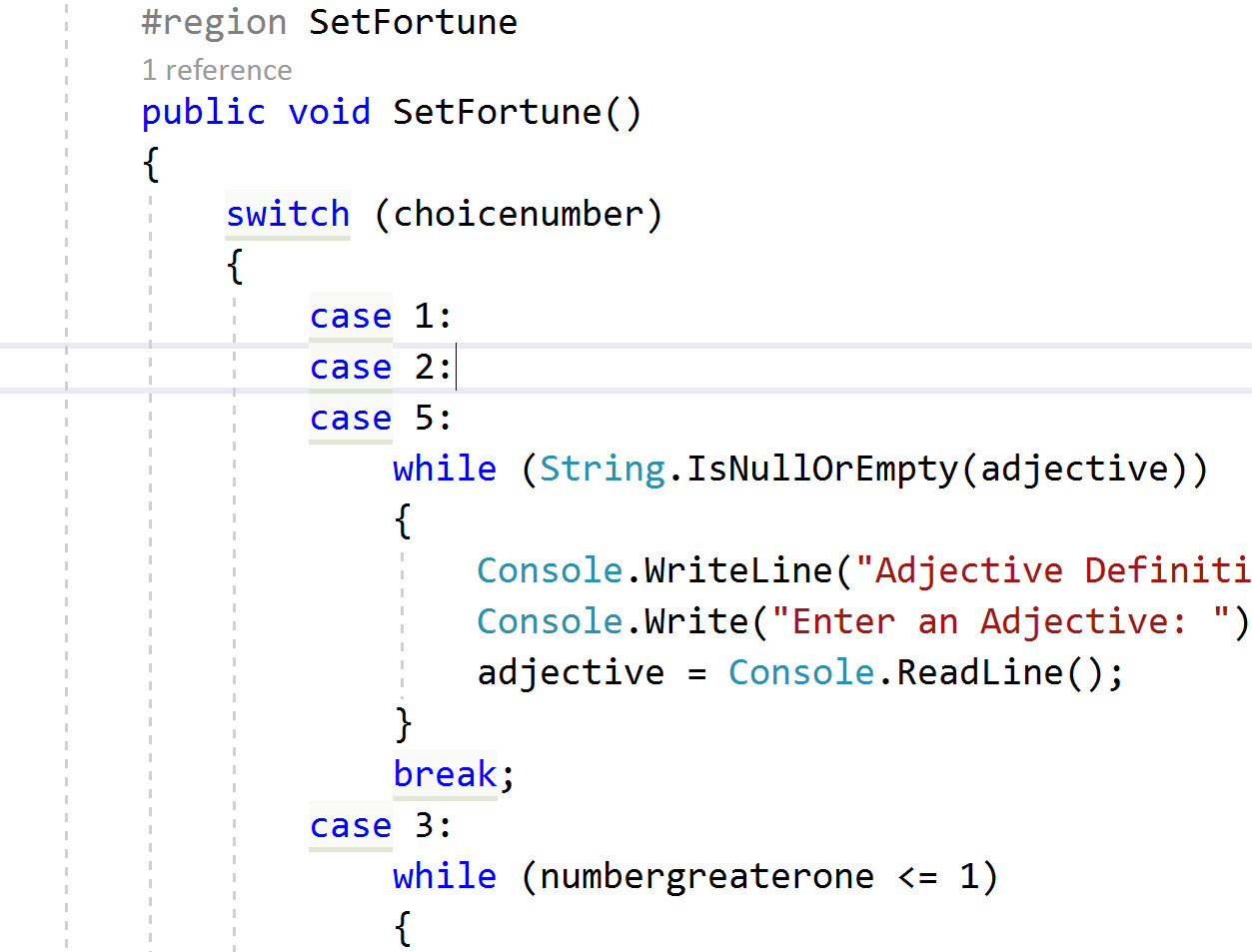

With a Cyclomatic Complexity score of 31, Madame Seer knew something was unusual in a member of her Game class, so she went to investigate. She had controlled the program flow with many switch statements and embedded while loops to make sure users entered valid data through the Console window. She could make each switch case call a separate method, but the code would still have to return results based on the user's fortune, and each user choice is a cyclomatic path: a catch-22 for her software application.

Perhaps some code could be streamlined, but she could not See another way to structure her fortune choices that would reduce the cyclomatic complexity! The more Madame Seer looked at her code, the more she questioned this metric. The code looked maintainable and simple to her.

Madame Seer did more research on cyclomatic complexity and realized that this software quality metric has different interpretations and opinions within the developer community [link]. She learned that the metric has a history and a place in specific applications, but a developer should not blindly chase cyclomatic complexity numbers. Interpreting the metrics well calls for a holistic look at the code, the application, its testing, and its maintainability.

She discussed her research and findings with her AI crystal ball entity, but it had difficulty understanding what a holistic approach was, since such an approach cannot be defined purely by metrics, numbers, if/else statements, and the true/false point of view that a machine understands.

Depth of Inheritance

Glancing over her code metrics report, Madame Seer found her program had a low depth of inheritance, which meant her program did not have a deep hierarchy of class definitions (think of a small family living together versus three generations in one house). A deep hierarchy would have made her code harder to understand and more difficult to maintain.
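As a rough illustration (in Python, not the .NET types Visual Studio inspects), depth of inheritance can be measured as the longest chain of base classes above a class. Treating `object` as depth 0 is a convention assumed for this sketch, and the `Fortune` class hierarchy is hypothetical.

```python
def depth_of_inheritance(cls: type) -> int:
    """Longest chain of base classes from `cls` up to `object`.

    Assumption: `object` counts as depth 0, so a class with no explicit
    base has depth 1.
    """
    if cls is object:
        return 0
    # With multiple inheritance, follow whichever base chain is deepest.
    return 1 + max(depth_of_inheritance(base) for base in cls.__bases__)


# Hypothetical classes, not taken from the Fortune Teller program.
class Fortune:
    pass


class LoveFortune(Fortune):
    pass


class SecretLoveFortune(LoveFortune):
    pass


print(depth_of_inheritance(Fortune))            # 1
print(depth_of_inheritance(SecretLoveFortune))  # 3
```

Each extra level is one more class a reader has to open to understand inherited behavior, which is the maintenance cost the metric tries to flag.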

Class Coupling

Class coupling measures how many unique types a class depends on through parameters, local variables, return types, method calls, base classes, fields, and so on. High coupling would have made her fortune teller application difficult to maintain, but fortunately she kept it simple.

Lines of Code

The Lines of Code metric is based on the intermediate language [link] (IL) code rather than the source text, so it approximates the number of executable lines and is not an exact count of the lines in her source files. This metric could help Madame find lengthy code that is a candidate for refactoring into separate methods for better readability.
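Python has a loose analogue of an IL-based count: the line table of compiled bytecode. The sketch below is an illustration of the idea, not how Visual Studio works. It counts distinct source lines that produce bytecode, so comments and blank lines drop out just as they do when the count comes from IL. The `fortune` function is hypothetical.

```python
import dis
import types


def executable_lines(source: str) -> int:
    """Count distinct source lines that compile to bytecode.

    Rough analogue of an IL-based Lines of Code count: blank lines and
    comments produce no bytecode, so they are not counted.
    """
    lines = set()
    pending = [compile(source, "<fortune>", "exec")]
    while pending:
        code = pending.pop()
        for _, lineno in dis.findlinestarts(code):
            if lineno:  # skip None and the synthetic line 0 some versions emit
                lines.add(lineno)
        # Function and class bodies are compiled into nested code objects.
        pending.extend(c for c in code.co_consts if isinstance(c, types.CodeType))
    return len(lines)


compact = '''
def fortune(n):
    if n > 3:
        return "good"
    return "bad"
'''

chatty = '''
# Decide the customer's fortune.
def fortune(n):

    # Lucky numbers get good news.
    if n > 3:
        return "good"

    return "bad"
'''

print(executable_lines(compact) == executable_lines(chatty))  # True
```

The exact total can vary slightly between Python versions, a reminder that a count derived from compiled code approximates the source rather than matching it line by line.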

Maintainability Index

“Excellent job overall, you have a maintainability index of 60,” complimented her Crystal Ball. “The Maintainability Index is a combined metric that takes into account the Halstead volume, cyclomatic complexity, and lines of code.” Madame Seer learned that Visual Studio rates any index from 20 to 100 as good (green) maintainability, so she was delighted that her code scored 60.
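Microsoft's documentation gives the formula Visual Studio uses, rescaled so results fall between 0 and 100: MI = MAX(0, (171 - 5.2 × ln(Halstead Volume) - 0.23 × Cyclomatic Complexity - 16.2 × ln(Lines of Code)) × 100 / 171), where Halstead volume is N × log2(n) for N total and n distinct operators and operands. The Python sketch below plugs in hypothetical numbers (not Madame Seer's actual measurements) and applies the documented bands: 20 to 100 green, 10 to 19 yellow, 0 to 9 red.

```python
import math


def halstead_volume(total_tokens: int, distinct_tokens: int) -> float:
    """Halstead volume V = N * log2(n).

    N counts every operator and operand occurrence; n counts distinct ones.
    """
    return total_tokens * math.log2(distinct_tokens)


def maintainability_index(volume: float, complexity: int, lines_of_code: int) -> float:
    """Maintainability index, rescaled to 0..100 and clamped at 0."""
    raw = (171
           - 5.2 * math.log(volume)
           - 0.23 * complexity
           - 16.2 * math.log(lines_of_code))
    return max(0.0, raw * 100 / 171)


def rating(index: float) -> str:
    """Map an index to the color bands: 20+ green, 10-19 yellow, below 10 red."""
    if index >= 20:
        return "green"
    if index >= 10:
        return "yellow"
    return "red"


# Hypothetical inputs, not Madame Seer's actual measurements.
mi = maintainability_index(halstead_volume(400, 40), complexity=31, lines_of_code=60)
print(round(mi), rating(mi))  # 34 green
```

Because volume and lines of code enter through logarithms, doubling the size of a method costs far less than a linear penalty would, while the cyclomatic complexity term is linear.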

Conclusion

Every story has a happy ending. Madame Seer used Code Metrics to guide the continuous improvement of her software. She released her Fortune Teller Console program to her fans and made a fortune while her program users got theirs.
