Using Perl to Execute SQL on Data

Introduction

I had previously developed a complex database query solution for a consulting client of mine so they could query data in a hierarchical SQL database on their Linux servers to other formats so that it could be used for reporting and analysis without the use of expensive licensed products. I chose Perl at the time because the client had the ability to run this on their Linux servers where their databases resided and then easily exported the results to their Windows and other applications. Perl scripts work well together on Linux systems so this was a good match for the needs.

For this project demo, I decided to strip about 90% of the code delivery solution and generalize the architecture and coding techniques I used to solve the problem of using Perl and embedded SQL to query a dataset, convert to CSV, and output the results.

The Power of Perl

Perl is a scripting programming language that may be all forgotten or passed over with the beginner’s hype surrounding the easy to learn Python language. Take a little effort into learning the powers of Perl and you will find it is more capable in SQL, text manipulations, and a strong community of knowledgeable engineers that continuously add, update, and improve the language along with many additional modules (“libraries”) that you can import that expand or simplify the baseline code capabilities. Learn more about Perl here.

Architecture Mapping

Inputs

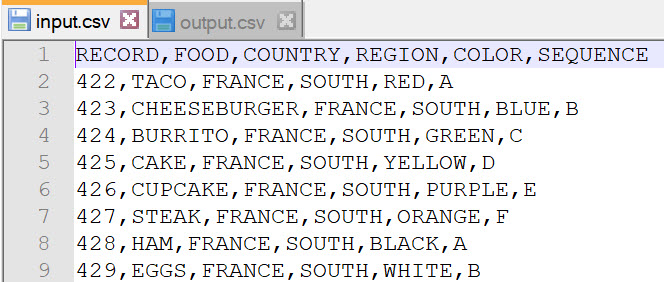

In this project, it assumes the dataset is in a simple CSV file. The first line of the CSV file is the header that contains the fields or columns of the data. The example file in the code repo (see bottom of page) is called input.csv and contains the entire dataset that we will be working with.

Here is the first row that contains the identifying fields or columns of information:

Let me briefly describe this example dataset:

RECORD: A positive integer value representing the record primary key or ID

FOOD: A text-based identifier of a food item

COUNTRY: A text-based identifier of which country category the data belongs to

REGION: A text-based identifier of which region the data belongs to

COLOR: A text-based identifier of what color code the data is (just for demo)

SEQUENCE: A,B,C… letter identifier of a sequence (just for the demo)

Here is an example of a couple of lines of the dataset:

424,BURRITO,FRANCE,SOUTH,GREEN,C

436,CHICKEN,FRANCE,SOUTH,GREEN,C

448,EGGS,FRANCE,SOUTH,GREEN,C

460,TACO,FRANCE,SOUTH,GREEN,C

472,PEACHES,FRANCE,SOUTH,GREEN,C

484,STEAK,FRANCE,SOUTH,GREEN,C

496,PASTA,FRANCE,SOUTH,GREEN,C

SQL

Any valid SQL statement can be executed on the dataset. This is the specific SQL statement that was executed in the demo script:

my @col = qw(RECORD FOOD COUNTRY REGION COLOR SEQUENCE);

my $cols = join “,”,@col;

select $cols from input.csv where COLOR like ‘GREEN%’ AND COUNTRY like ‘FRANCE%’

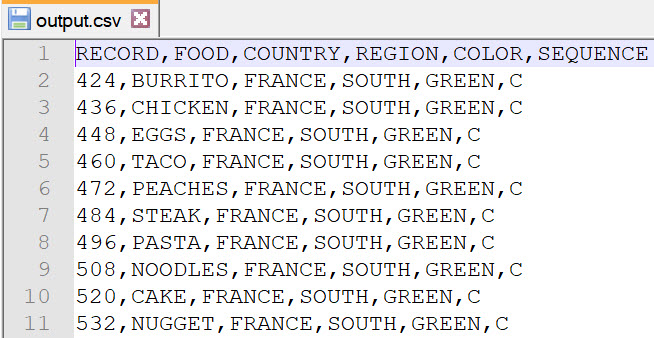

Outputs

The end result will be filter to COLOR fields like GREEN and COUNTRY like FRANCE

Scripting

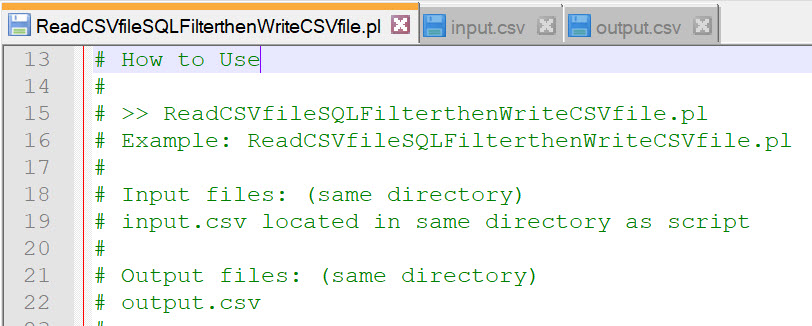

The main programming script for this project example is ReadCSVfileSQLFilterthenWriteCSVfile.pl which is included in the project code repo (see bottom of page). You may modify the scripting file for your personal or business objectives. This script discussion assumes basic familiarity with Perl. This project article is meant to “demo” and not “teach” a Perl project.

Perquisites

Perl installation and appropriate permissions to read/write to your working directory. Also, you may need to procure (free) additional modules for the time and DBA (SQL) functions. Here are the project module (library) dependencies:

use DBI;

How to Use

Description of the File

#!/usr/bin/perl

#=======================================================================

# ReadCSVfileSQLFilterthenWriteCSVfile

# File: ReadCSVfileSQLFilterthenWriteCSVfile.pl

#

# Description: Simple perl script to import a CSV file (contains records

# of data – could be a dump from a database), execute custom SQL,

# and write back to a CSV file.

#

# This script uses an example of SQL to filter out select records

# DBI is used in this example

Bonus SQL Example

There is an example of how to query, select, and order by specific fields (First country, then region in this example) DBI and SQL commands.

select RECORD,FOOD,COUNTRY,REGION,COLOR,SEQUENCE from input.csv where COLOR like ‘GREEN%’ AND FOOD like ‘TACO%’ ORDER BY COUNTRY,REGION

Code

The code project is not an open collaborative or maintained project, so please do not contact me regarding “improvements” other than bugs or corrections to my article and or information contained in the code repo.

Click here to access the project on my GitHub account.